Physical AI Podcast & Summit | Managing Partner, F50

February 13, 2026

Executive Summary

A new narrative is emerging around the xAI–SpaceX pairing: if AI’s scaling bottleneck becomes terrestrial energy, cooling, land, and grid interconnect, then the long-run “escape hatch” is to move portions of AI infrastructure off-planet—first to orbit, then to lunar manufacturing for truly extreme scale. Elon Musk has described this as a pathway from Earth-orbital data centers (100–200 GW/year) to eventually terawatt-per-year space-based compute, and then “beyond a terawatt” via Moon factories and a lunar mass driver.

This is not just rhetoric: SpaceX’s Orbital Data Center application—now formally accepted for comment by the Federal Communications Commission—seeks authority for a new non‑geostationary system “of up to one million satellites,” operating across shells from ~500 km to 2,000 km altitude, and relying heavily on optical inter-satellite links.

In this newsletter, Physical AI means the autonomy stack that makes those ambitions operational: embedded AI for spacecraft thermal/power management, swarm ops, collision avoidance, robotic lunar construction, and—on the software side—agentic systems (“Macrohard”) aimed at doing end-to-end engineering work on real aerospace hardware.

Orbital Data Centers and Space-Based Compute

SpaceX’s filing frames orbital data centers as a response to accelerating AI-driven demand for electricity—and describes orbital compute as enabled by near-constant solar power plus radiative heat rejection into vacuum. The company’s narrative gives an explicit scaling arithmetic: launching 1 million tonnes/year of satellites at ~100 kW of compute per tonne would add ~100 GW of AI compute capacity annually (as written in the application narrative). The FCC public notice also details conceptual networking: optical links routing traffic within the orbital system and interfacing with Starlink’s laser mesh to reach authorized earth stations.

The key Physical AI question is not “can you put GPUs in space,” but how you operate a compute-optimized spacecraft fleet as a safety-critical cyber-physical system. Within SpaceX’s own narrative and standard spacecraft engineering constraints, the near-term operational use cases look like:

Autonomous thermal and power orchestration. In orbit, heat is rejected primarily by radiation (not convection), which turns “cooling” into a continuous optimization problem over radiator area, attitudes, duty cycles, and orbital lighting. A Physical AI controller would schedule training/inference loads against instantaneous thermal capacity and solar power availability.

Optical-network control as “compute scheduling.” If the constellation relies on optical inter-satellite links and a laser mesh, then traffic engineering becomes part of model performance: minimizing crosslink traffic during training all‑reduce, placing inference workloads close to the data and users they serve, and degrading gracefully under link loss.

Collision avoidance and end-of-life autonomy. SpaceX’s narrative emphasizes automated collision avoidance and rapid deorbit for early failures at low altitudes, alongside debris‑mitigation compliance. This intersects directly with broader orbital-debris dynamics (collisional cascading) studied by NASA and peer agencies.

Capacity planning grounded in energy reality. The application itself cites International Energy Agency analysis on data-center electricity trajectories, underscoring why the energy bottleneck is central to the orbital-compute pitch.
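To make the thermal-and-power item above concrete, here is a minimal Python sketch, not a description of SpaceX's design. It assumes an idealized radiator that rejects heat according to the Stefan-Boltzmann law, ignores absorbed sunlight and Earth infrared, and treats every watt of compute power as a watt of heat. The `SatState` class, its fields, and all numbers are hypothetical.

```python
from dataclasses import dataclass

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


@dataclass(frozen=True)
class SatState:
    """Hypothetical snapshot of one compute satellite (illustrative fields only)."""
    radiator_area_m2: float   # effective radiating area
    radiator_temp_k: float    # radiator surface temperature
    emissivity: float         # surface emissivity, between 0 and 1
    solar_power_w: float      # electrical power available right now


def radiative_capacity_w(s: SatState) -> float:
    """Ideal heat rejected to deep space: P = emissivity * sigma * A * T^4."""
    return s.emissivity * STEFAN_BOLTZMANN * s.radiator_area_m2 * s.radiator_temp_k ** 4


def allowed_compute_w(s: SatState, housekeeping_w: float = 0.0) -> float:
    """Compute load is capped by whichever is smaller: power supply or heat rejection."""
    budget = min(s.solar_power_w, radiative_capacity_w(s)) - housekeeping_w
    return max(0.0, budget)


# Assumed example values: 100 m^2 radiator at 300 K, emissivity 0.9.
sunlit = SatState(100.0, 300.0, 0.9, solar_power_w=100_000.0)
eclipse = SatState(100.0, 300.0, 0.9, solar_power_w=0.0)

print(f"sunlit:  {allowed_compute_w(sunlit):,.0f} W (thermally limited)")
print(f"eclipse: {allowed_compute_w(eclipse):,.0f} W (power limited)")
```

With these assumed values the sunlit satellite has more power than its radiator can shed, so the radiator sets the ceiling. That is the sense in which "cooling" becomes a scheduling input rather than a fixed facility property.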

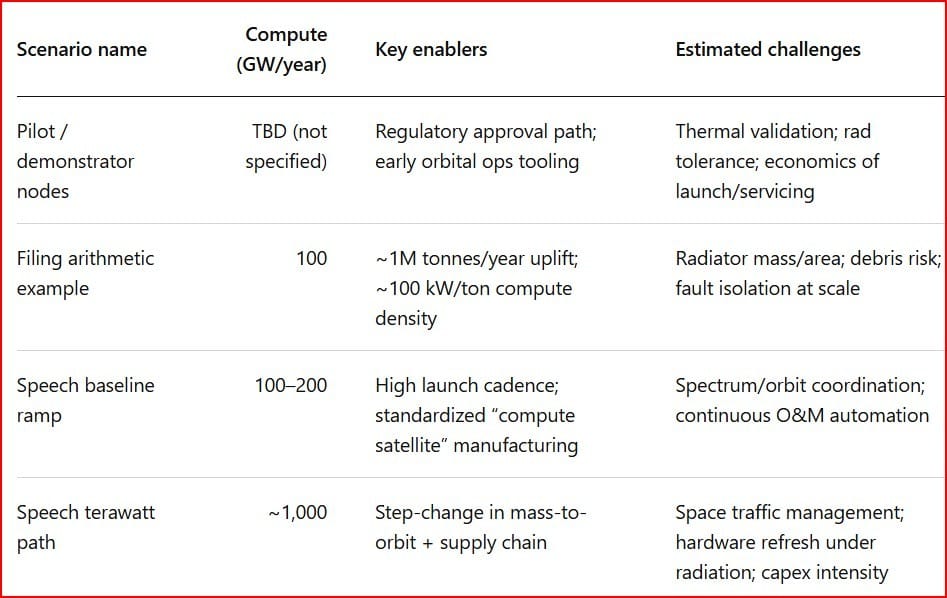

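The capacity numbers being discussed publicly come from two places: SpaceX’s FCC narrative example (1 million tonnes/year at ~100 kW of compute per tonne, or ~100 GW/year) and Musk’s “100–200 GW/year → ~1 TW/year path” remarks. Taken as stated, without inventing extra parameters, the filing’s example sits at the low end of Musk’s near-term range and roughly an order of magnitude below his terawatt-per-year figure.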
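A minimal Python sketch of that comparison follows. The two constants come from the filing's example as quoted above. Applying the filing's ~100 kW per tonne to Musk's figures to back out an implied launch mass is an assumption made here for illustration, not something either source states.

```python
KW_PER_TONNE = 100            # filing's example: ~100 kW of compute per tonne
TONNES_PER_YEAR = 1_000_000   # filing's example: 1 million tonnes launched per year


def gw_added_per_year(tonnes_per_year: float, kw_per_tonne: float = KW_PER_TONNE) -> float:
    """Annual compute capacity added, converted from kW to GW."""
    return tonnes_per_year * kw_per_tonne / 1e6


def tonnes_needed(gw_per_year: float, kw_per_tonne: float = KW_PER_TONNE) -> float:
    """Launch mass implied by a GW/year target (assumes the filing's kW-per-tonne ratio)."""
    return gw_per_year * 1e6 / kw_per_tonne


rows = [
    ("FCC narrative example", gw_added_per_year(TONNES_PER_YEAR)),
    ("Musk remarks, low end", 100.0),
    ("Musk remarks, high end", 200.0),
    ("Musk remarks, ~1 TW/year", 1000.0),
]

for label, gw in rows:
    mt = tonnes_needed(gw) / 1e6  # million tonnes per year
    print(f"{label:26s} {gw:6.0f} GW/yr   implied mass: {mt:4.0f} Mt/yr")
```

The only parameter doing real work is the kW-per-tonne ratio; change it and every implied mass figure scales inversely.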

AI Satellites and Reinforcement Learning in Space

In the all-hands content circulating publicly, an xAI RL/inference lead explicitly described running reinforcement learning training and production inference “at a large scale on the earth and probably soon in space,” with systems designed for resilience and scaling. That statement matters because it reframes “AI satellites” from a communications megaconstellation to a distributed learning + inference substrate—where orbit is not just hosting compute, but becoming part of the training environment.

Concrete Physical AI use cases—grounded in known space autonomy patterns and the constraints of downlink/latency—include:

On-orbit autonomy for data triage and closed-loop science. Jet Propulsion Laboratory notes that limits on returning scientific data create a bottleneck for future missions; onboard autonomy prioritizes what to transmit and when. A compute-rich orbital layer could extend that concept from single spacecraft to constellations.

RL for formation/constellation control under constraints. Recent peer-reviewed work applies deep RL methods to satellite formation attitude/control problems under multiple constraints—directly relevant to dense-shell operations where collision risk and propellant budgets are first-order.

Distributed learning under intermittent topology. Any “training in orbit” must cope with time-varying connectivity and bandwidth. Research on space edge computing and orbital network scheduling highlights why topology-awareness and data locality matter in LEO compute networks.

Radiation-aware reliability engineering (AI for AI hardware). Space radiation susceptibility (single-event effects, total ionizing dose, displacement damage) is a well-documented constraint for electronics in space. If SpaceX pursues non‑traditional, high‑performance compute hardware rather than fully “rad-hard” parts (a tradeoff discussed widely in space systems), then Physical AI would need to manage fault detection, checkpointing, and graceful degradation as a continuous operational discipline—especially at constellation scales.
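One way to reason about the checkpointing point above is Young's classic first-order approximation for checkpoint spacing, T ≈ sqrt(2 × C × MTBF), where C is the time to write one checkpoint. This formula is not from the SpaceX or xAI material; it is a standard rule of thumb brought in here for illustration. The failure rates and node counts below are hypothetical, and the fleet model assumes independent, identical node failures.

```python
import math


def fleet_mtbf_s(node_mtbf_s: float, nodes: int) -> float:
    """Mean time between failures for a job spanning `nodes` nodes.

    Assumes independent, identically distributed failures, so MTBF shrinks as 1/N.
    """
    if node_mtbf_s <= 0 or nodes <= 0:
        raise ValueError("node_mtbf_s and nodes must be positive")
    return node_mtbf_s / nodes


def young_interval_s(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's approximation for the checkpoint interval: sqrt(2 * C * MTBF)."""
    if checkpoint_cost_s <= 0 or mtbf_s <= 0:
        raise ValueError("checkpoint_cost_s and mtbf_s must be positive")
    return math.sqrt(2.0 * checkpoint_cost_s * mtbf_s)


# Hypothetical numbers: 1000-hour node MTBF, 50-second checkpoint write.
NODE_MTBF_S = 1000 * 3600
CHECKPOINT_COST_S = 50.0

for n in (1, 100, 10_000):
    interval = young_interval_s(CHECKPOINT_COST_S, fleet_mtbf_s(NODE_MTBF_S, n))
    print(f"{n:6d} nodes -> checkpoint roughly every {interval / 60:.1f} min")
```

The takeaway is directional: as the number of nodes in a job grows, the sensible checkpoint interval shrinks with the square root of the fleet MTBF, which is why fault handling becomes a continuous discipline at constellation scale.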

Lunar Infrastructure and Mass Driver

Musk’s outline marks a clean inflection point: orbital compute may reach “a mere terawatt per year,” but to go beyond that, the roadmap shifts to Moon factories building AI satellites and a lunar mass driver launching payloads at high cadence. While this sounds novel, the enabling technologies have deep roots: NASA technical literature has studied lunar electromagnetic launch systems for in‑situ resource utilization (ISRU) transport, noting prior 1970s-era conclusions that mass drivers could be optimal for moving lunar materials compared to chemical rockets.

What does Physical AI do on the Moon? Everything that humans and conventional automation struggle to do under vacuum, dust, thermal extremes, and communications delay.

Autonomous excavation, construction, and ISRU operations. NASA’s lunar surface technology roadmapping explicitly targets autonomous excavation and construction using in‑situ resources, alongside scalable ISRU production and survival in extreme environments. If “Moon factories” are real, they require a robotic workforce that can mine, move, process, and assemble—end-to-end.

Energy-first manufacturing. Many lunar industrial concepts hinge on bootstrapping power generation (e.g., in-situ solar cell manufacturing) and producing consumables like oxygen from regolith. A lunar compute-satellite factory would likely need the same primitives: high-throughput materials handling, precision manufacturing, and continuous QA without human oversight.

Mass driver operations as a guidance-and-safety problem. A lunar electromagnetic launcher implies precision alignment, health monitoring, and trajectory assurance at high repetition rates. NASA and related studies highlight the engineering depth of electromagnetic launchers (power electronics, track alignment, payload dynamics). Physical AI would sit on top as the supervisory control layer: anomaly detection, predictive maintenance, launch scheduling, and “no-go” logic.
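The supervisory “no-go” logic described above can be sketched as a simple rule gate. This is a toy illustration in Python, not a real launcher interface: the telemetry fields, the `Limits` thresholds, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchTelemetry:
    """Hypothetical pre-launch readings for one mass-driver shot."""
    track_misalignment_mm: float
    capacitor_charge_frac: float   # 0.0 to 1.0
    coil_temp_k: float
    trajectory_clear: bool         # result of an upstream conjunction check


@dataclass(frozen=True)
class Limits:
    """Hypothetical limits; real values would come from engineering analysis."""
    max_misalignment_mm: float = 0.5
    min_charge_frac: float = 0.98
    max_coil_temp_k: float = 350.0


def no_go_reasons(t: LaunchTelemetry, limits: Limits = Limits()) -> list[str]:
    """Return every violated rule; an empty list means 'go'."""
    reasons = []
    if t.track_misalignment_mm > limits.max_misalignment_mm:
        reasons.append("track misalignment above limit")
    if t.capacitor_charge_frac < limits.min_charge_frac:
        reasons.append("capacitor bank undercharged")
    if t.coil_temp_k > limits.max_coil_temp_k:
        reasons.append("coil temperature above limit")
    if not t.trajectory_clear:
        reasons.append("trajectory not cleared")
    return reasons


def go_for_launch(t: LaunchTelemetry, limits: Limits = Limits()) -> bool:
    return not no_go_reasons(t, limits)


nominal = LaunchTelemetry(0.2, 0.99, 310.0, True)
degraded = LaunchTelemetry(0.8, 0.99, 360.0, True)

print(go_for_launch(nominal), no_go_reasons(nominal))
print(go_for_launch(degraded), no_go_reasons(degraded))
```

Returning the full list of violated rules, rather than a bare boolean, keeps each scrubbed launch explainable, which matters when the same gate fires at high repetition rates.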

AI-Driven Rocket Design and Macrohard

“Muskonomy” visions aside, the all-hands transcript describes a concrete aspiration for “Macrohard”: a computer-using agent (“human emulator”) capable of doing what a human can do on a computer, including advanced engineering tools—and even “rocket engines fully designed by AI.” That is an explicit bridge between frontier AI and aerospace hardware development: agentic software acting directly on the digital tooling stack that produces physical flight systems.

To make that operational (not just inspirational), Macrohard-like systems would likely need to evolve into a verifiable “digital engineering” layer akin to, but more autonomous than, today’s digital twins:

Digital twins as the verification substrate. NASA’s Digital Twin literature defines digital twins as integrated, multi-physics, multi-scale simulations updated by data across a lifecycle, supporting decision-making and health/mission management. This is the natural “ground truth loop” for AI-generated designs: the agent proposes; the twin simulates; the physical tests feed back.

Propulsion-focused digital twin precedent. NASA-affiliated work on rocket engine digital twins (modeling + validation against tests) shows the direction of travel: higher-fidelity simulation stitched into operational decision support. A Macrohard agent would sit above this stack, automating parameter sweeps, failure-mode exploration, and documentation—while maintaining traceability.

Factory and test integration. If the goal is faster iteration, the agent must connect design outputs to manufacturing constraints and acceptance testing, then learn from yield and anomaly data. Digital-thread and lifecycle alignment is a known requirement in digital engineering programs; Macrohard’s distinguishing move would be automating that integration rather than merely modeling it.
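The “agent proposes, twin simulates, tests feed back” loop above can be sketched as a traceable parameter sweep. Everything here is a stand-in: `toy_twin` is a made-up one-line formula, not a real digital twin, and the design fields, margin threshold, and function names are hypothetical. The point is only the shape of the loop and the audit trail.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Trace:
    """Append-only record of every design evaluated, kept for traceability."""
    records: list = field(default_factory=list)

    def log(self, design: dict, result: dict, accepted: bool) -> None:
        self.records.append({"design": design, "result": result, "accepted": accepted})


def sweep(
    candidates: list,
    simulate: Callable[[dict], dict],
    accept: Callable[[dict], bool],
    trace: Trace,
) -> list:
    """Run each proposed design through the simulator and log the outcome."""
    accepted = []
    for design in candidates:
        result = simulate(design)
        ok = accept(result)
        trace.log(design, result, ok)   # rejected designs are logged too
        if ok:
            accepted.append(design)
    return accepted


def toy_twin(design: dict) -> dict:
    """Hypothetical stand-in for a digital twin: margin falls as pressure rises."""
    return {"stress_margin": 1.5 - design["pressure_bar"] / 200}


def margin_ok(result: dict) -> bool:
    return result["stress_margin"] >= 0.2   # hypothetical acceptance threshold


trace = Trace()
proposals = [{"pressure_bar": p} for p in (100, 200, 300)]
passing = sweep(proposals, toy_twin, margin_ok, trace)

print("accepted:", passing)
print("evaluated:", len(trace.records))
```

Logging rejected designs alongside accepted ones is the design choice that matters: it is what would let a reviewer reconstruct why an agent-generated design was chosen.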

Notably, public primary filings do not specify technical milestones, timelines, or scope for “Macrohard”; what exists publicly today is directional language in meeting material and media reporting.

Understanding the Universe

The philosophical capstone in Musk’s outline is explicit: “to understand the universe you must explore the universe,” and the motivation for combining the two companies is accelerating exploration and “processing the data necessary to understand the cosmos.” From a Physical AI lens, this is less about poetry and more about architecture:

Autonomy is how science scales beyond downlink. JPL’s autonomy programs emphasize that future planetary science will produce orders of magnitude more data than can be returned, making onboard decision-making and prioritization essential. NASA also highlights that onboard AI can identify regions of interest to avoid burying urgent information in bulk transmissions.

Space edge compute reduces latency and bandwidth pressure. Research on “space edge computing” and onboard AI argues for processing data near collection points, reducing the amount that must traverse limited links to ground. An orbital compute layer could generalize this: not only spacecraft-local autonomy, but constellation-level aggregation, filtering, and model updates.

The “Physical AI flywheel” thesis. If orbital compute exists, it can (in principle) train and validate autonomy systems in the same environment they must survive—closing a loop between the physics of space operations and the models that control them. That is consistent with the stated intent to run RL training and inference at scale “soon in space,” and with space-system needs for reliability engineering under failure.
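The downlink-prioritization idea above can be illustrated with a small Python sketch. It uses a greedy priority-per-megabyte heuristic, which is a simplification chosen here and not a description of any JPL or NASA flight software; greedy selection is not guaranteed to be optimal. The `Product` fields, scores, and sizes are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    """Hypothetical onboard data product awaiting downlink."""
    name: str
    size_mb: float
    priority: float   # assumed science-value score from an onboard model


def select_for_downlink(products: list, budget_mb: float) -> list:
    """Pick products by priority per megabyte, skipping any that do not fit."""
    chosen = []
    used = 0.0
    ranked = sorted(products, key=lambda p: p.priority / p.size_mb, reverse=True)
    for p in ranked:
        if used + p.size_mb <= budget_mb:
            chosen.append(p)
            used += p.size_mb
    return chosen


queue = [
    Product("full_frame_mosaic", size_mb=100.0, priority=20.0),
    Product("plume_detection_crop", size_mb=5.0, priority=10.0),
    Product("context_thumbnail", size_mb=1.0, priority=1.0),
]

for p in select_for_downlink(queue, budget_mb=10.0):
    print(p.name)
```

With a 10 MB pass, the small high-value crop and the thumbnail go down while the bulk mosaic waits, which is the behavior the text describes as avoiding urgent information being buried in bulk transmissions.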

Strategic implications for founders and investors

The FCC process is now a gating item: orbital compute at scale becomes as much a regulatory/orbital-governance problem as a hardware problem.

“Cooling” becomes a product category: radiators, thermal materials, and thermal autonomy are central constraints for space compute density.

AI infrastructure financing will increasingly track energy and grid constraints, which is why “energy-first” strategies (including off-world) resonate.

If lunar industry is pursued, ISRU, autonomous construction, and industrial robotics become the long pole—not the rockets alone.

Agentic engineering (“Macrohard”) only creates durable value if it plugs into validated digital-twin/digital-thread processes that make designs certifiable and buildable.

Recommended reading (Sources)

SpaceX Orbital Data Center System narrative (PDF): https://cdn.geekwire.com/wp-content/uploads/2026/01/SpaceX-Center.pdf

FCC Public Notice (DA-26-113A1, text): https://docs.fcc.gov/public/attachments/DA-26-113A1.txt

IEA (2025) “Energy and AI” (landing page): https://www.iea.org/reports/energy-and-ai

IEA (2025) “Energy and AI” (PDF): https://iea.blob.core.windows.net/assets/dd7c2387-2f60-4b60-8c5f-6563b6aa1e4c/EnergyandAI.pdf

NASA NTRS: “A Lunar Electromagnetic Launch System for In‑Situ Resource Utilization” (PDF): https://ntrs.nasa.gov/api/citations/20110007073/downloads/20110007073.pdf

#SpaceTech #PhysicalAI #AIInfrastructure #Autonomy